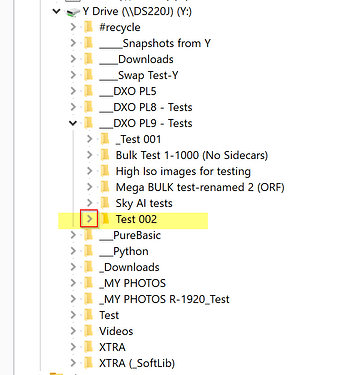

That is why you ought to follow “best practice” and avoid really big folders with more than 1000 pictures (especially RAW files). Use an external DAM or a file viewer like XnView instead, since it prepares smaller previews in advance. It normally does that just once, not every time it opens the same folder, as PhotoLab has a habit of doing.

I don't always do that myself, since I rarely have that many pictures in my picture folders. Today it took about 10 seconds to open a folder with 500 Sony ARW raw files, and I have no problem with that.
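If you are unsure which of your folders are over that rough 1000-picture limit, a small script can list them. This is only a sketch of mine and has nothing to do with PhotoLab itself: the function name `find_big_folders`, the extension list and the default limit are my own assumptions, so adjust them to your cameras and habits. It only counts files and never moves or changes anything.

```python
from pathlib import Path

# Assumed set of picture extensions; adjust to your own cameras and formats.
IMAGE_EXTENSIONS = {".arw", ".dng", ".jpg", ".jpeg", ".tif", ".tiff"}


def find_big_folders(root, limit=1000):
    """Return (folder, count) pairs for folders that directly hold more than `limit` pictures."""
    root = Path(root)
    # Check the root folder itself plus every subfolder below it.
    folders = [root] + [p for p in root.rglob("*") if p.is_dir()]
    results = []
    for folder in folders:
        # Count only picture files sitting directly in this folder.
        count = sum(
            1
            for f in folder.iterdir()
            if f.is_file() and f.suffix.lower() in IMAGE_EXTENSIONS
        )
        if count > limit:
            results.append((folder, count))
    # Biggest folders first.
    return sorted(results, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    import sys

    start = sys.argv[1] if len(sys.argv) > 1 else "."
    for folder, count in find_big_folders(start):
        print(f"{count:6d}  {folder}")
```

Run it with the top folder of your picture archive as the argument. Any folder it prints is a candidate for splitting into smaller ones before you open it.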

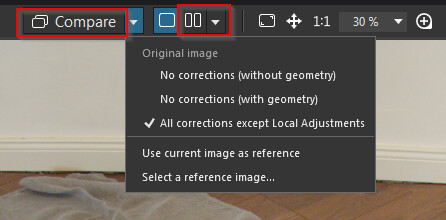

Normally I never select the premade AI presets from the list either. At least on my computer, that method takes about five times longer per picture when exporting than just pointing and clicking “freehand” to create the masks, and it probably costs the same kind of extra time and resources when opening a folder.

As I see it, there is only one reason to use the method with premade presets, and that is if, and only if, you want to copy your AI masks from one picture and paste them onto a selection of other files (for some reason this works only with the premade ones in the drop-down list). Only with that method will the AI apply the masks correctly to the destination files.

I have worked a lot with my animal pictures over the last few days, and it works really well and efficiently. I have no problems at all exporting either. I don't think you need to have these problems either, if you adapt to the conditions we live under for the moment.

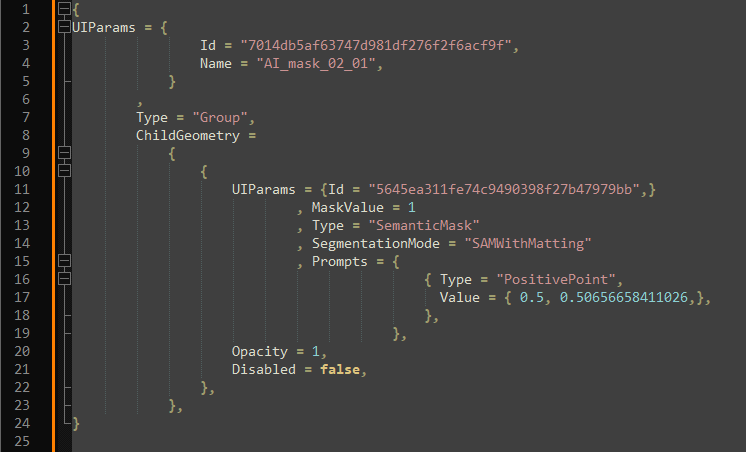

I normally use 2-3 masks per picture and do what most people do, I guess.

This was made with three masks, one for the animal, one for the sky and one for the rock.

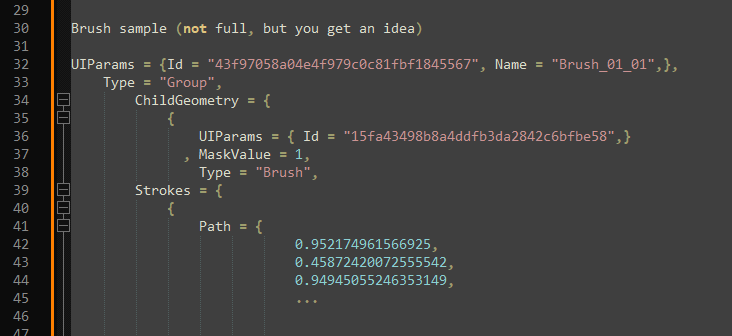

The other was made with one mask for the bird and one Control Line.