@sgospodarenko @Joanna @platypus @KeithRJ I apologise for my error, but it was prompted by an issue where PL5 appeared not to be keeping up with C1 during a test, so I introduced additional software to see “who” was telling the truth and “who” was lying! Unfortunately, introducing new software only created more problems in the shape of sidecar files etc.!

The following test was conducted on Windows 10, with the files no longer on a flash drive but back on an HDD.

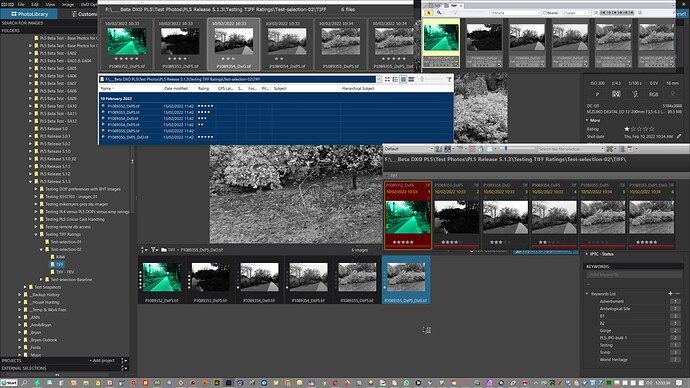

Photo Mechanic (PM) and PL5 were the “raters” and IMatch, FastRawViewer (FRV) and PIE the “monitors”. IMatch updates automatically but with a slight delay; FRV and PIE only update after a Refresh command.

The ‘Rating’ values for the TIFF files (and there lies the “large” problem) were all set to 1* by PL5 before the other software was pointed at the data now on the HDD. PM was then used to change the ‘Rating’ of the 6 photos to 5, 4, 3, 2, 0, 5 as quickly as PM would allow.

With the ‘Sync’ option set, PL5 kept up with the first two changes, then missed two, caught one and missed the final change. All the other software then caught up, either automatically or after a Refresh.

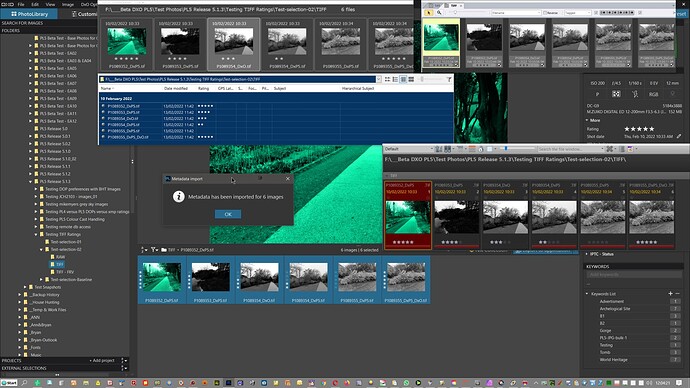

PL5 then needed a ‘File’/‘Metadata’/‘Read from Image’ command (effectively a refresh, albeit one that will update the database, update the DOP and update the display) after selecting all the photos to ensure that it finally “caught up”.

I realise that there is a warning when setting the ‘Sync’ option, and that the issue is exacerbated in these tests by the size of the TIFF files being used; they are far larger than anything I have used in testing before.

Because of problems with ‘Rating’ going missing, I have been suggesting that PL5 users use the ‘Sync’ option to ensure that the ‘Rating’ makes it off the database and out to external storage. But what do I suggest now? What should the PL5 workflow look like to ensure that it is easy most of the time but also totally secure?

Hence, I was disappointed that PL5 could not keep up and that manual intervention was needed. IMatch sometimes seems a little “ponderous” to me but it didn’t miss the changes made by PM and got there in the end without any help from me!

I am concerned about the detection mechanism used in PL5, which captured some changes and then missed others when “things got rough”. I am also concerned about whether PL5 uses “brute” force with ‘Metadata’/‘Read from Image’ (in truth the update on my 6 images was quick, but I am not offering to repeat the exercise with hundreds of TIFFs), and at that point I am out of my depth!

How PL5 could have avoided this problem, I am not sure. How “heavy” a touch PL5 exerts when reading metadata from a large directory of TIFFs, I am not sure either. But the first thing we need to know is how PL5 does what it does: that old question of feedback and a two-way conversation!
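To make the “brute force” question a little more concrete, here is a minimal Python sketch of one general way a program can catch up on missed changes without re-reading the metadata of every file: take a cheap snapshot of each file's modification time and size, then re-read metadata only for files whose snapshot has changed. This is purely an illustration of the idea. I do not know how PL5 detects changes, and the function names `snapshot` and `changed_files` are my own inventions, not anything from PL5 or the other software mentioned here.

```python
import os


def snapshot(directory):
    """Map each TIFF path to (mtime_ns, size) as a cheap change fingerprint.

    Hypothetical helper: only directory entries are inspected, the TIFF
    contents themselves are never opened.
    """
    result = {}
    for entry in os.scandir(directory):
        if entry.is_file() and entry.name.lower().endswith((".tif", ".tiff")):
            info = entry.stat()
            result[entry.path] = (info.st_mtime_ns, info.st_size)
    return result


def changed_files(before, after):
    """Return paths that are new or whose fingerprint differs between snapshots."""
    return sorted(path for path, fp in after.items() if before.get(path) != fp)
```

With something like this, a “catch up” pass would only need to re-read the embedded metadata of the files returned by `changed_files`, rather than every TIFF in the directory. Whether that would be reliable enough in practice (for example on drives with coarse timestamps) is a separate question.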

I have bent my credibility a bit with the item that started this topic, but that is “only” my pride that is damaged. If users run into the problem I encountered here (as a result of my or other people’s recommendations), then that is a whole different thing!

EDIT:- I took delivery of a new NVMe drive today, so I saved 880 RAW photos from PM as TIFFs with ‘Rating’=1*. These turned out to be rather small TIFFs, about 23MB.

I then ran a batch task in PM to set ‘Rating’=5* while the directory was open in PL5, and all were successfully updated to the new 5* rating in PL5. The PL5 DB also resides on the NVMe.

The test above needs to be repeated on HDD.

Both tests then need to be repeated with much larger TIFF files just to see what it takes for PL5 to be unable to keep up.

Running tests like this doesn’t actually help to justify the expense to my wife but…

Putting the photos on an HDD and then moving the database to a USB 3-connected SATA SSD did not change the results. PL5 certainly ran a long way behind the PM ‘Rating’ changes, but there were no wrong ‘Rating’ values at the end of the tests.

End Edit