If DxO is monitoring this thread, I would be interested in an explanation of why the raw image size doesn’t seem to affect DeepPRIME processing. That is, processing times are very similar for large Sony A7RV raws and much smaller OM-1 raws. Processing is a bit faster for the OM-1 raws, but not by much. I can monitor CPU load while processing, and it looks like DxO isn’t “trying hard” for the OM-1 images: CPU load tends to be low. Processing the A7RV raws pins the CPU load at 100%.

I, too, expected similar results from base, Pro and Max processors, because they share the same Neural Engine. But my new M1 Pro 14" MBP and M1 Max Studio both cut DeepPRIME XD (DPXD) processing time by 50% compared to my previous M1 MBP and M1 mini. The MBP and mini both have 16GB RAM. It seems DeepPRIME has become less reliant on the Neural Engine and better at leveraging additional GPU resources when they’re available. Interestingly, the MBP and Studio perform almost identically on my test set of 33MP and 61MP RAW files.

Thanks for your insights @Jacques4242 , interesting to read.

I wonder if the extra memory bandwidth the Pro, Max and Ultra have over the base M1 is coming into play here too, speeding up the processing through faster data throughput? I agree, it certainly looks possible that the extra GPU cores in the Pro/Max/Ultra make using the GPU preferable to using the NE.

Hard to be certain without testing the performance with the machines set to use specifically either the NE or the GPU.

I found this page that listed the differences between all the M1 SoCs:

Compared: Apple Silicon M1 vs M1 Pro vs M1 Max vs M1 Ultra | AppleInsider

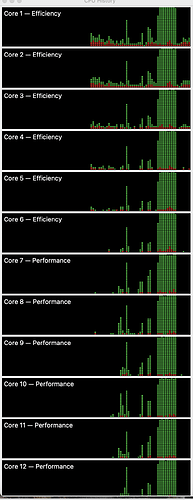

There are other things going on. In an earlier post in this thread I wondered why processing of the OM-1 raws wasn’t much faster than the A7RV raws, given that the OM-1 raws are 1/3 the size of the Sony raws. I took a look at the individual CPU core load on my M3 MBP16, and it looks like the system doesn’t use the P cores as much when processing the smaller raws. When processing the A7RV raws, all 12 cores are pushed to 100% (E and P). For some reason, DxO “doesn’t try as hard” for the smaller raws. You can open Activity Monitor and view CPU history, which displays the load on each CPU core. It is quite clear that processing is different in the two cases.

Thanks for sharing. It’s great that hardware keeps improving in ways that programs like DxO can take advantage of.

At the moment I’m using an M1 MacBook Pro to process Canon R5 raws with DxO 5.

It normally takes 11 seconds to process one raw file to a TIFF, which was a huge improvement for me when I replaced my elderly PC with a MacBook Pro a while ago.

I usually run around 5 images at a time, so I don’t really need to update.

That is unexpected!

I need to have a look at what happens with different sized raw files on my M1 Mac Mini now.

If you want to see what I saw, I have attached a screen capture of the 12 CPU cores, first processing the OM-1 raws, and then the A7RV raws. You can tell when I started processing the A7RV raws because all the cores went to 100%.

I have a theory (actually just a WAG). I think DxO splits raw images into segments and then processes each segment in a separate thread. Say DxO is using 10 MP “segments”. When I process the OM-1 raws, it splits each image into two segments and processes each segment in a separate thread. When I process the A7RV raws, it creates six segments and processes them in six threads. The segments are combined for output. If your hardware can support the six concurrent threads, then the A7RV processing will be (almost) as fast as the OM-1 processing. I have no idea if this is correct, but it does describe the processing behavior.
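To make that guess concrete, here is a toy model of it in Python. Everything in it is an assumption for illustration: the 10 MP segment size comes from the guess above, the nominal 20 MP and 61 MP image sizes are approximations, the function names are made up, and nothing here reflects how DxO actually schedules its work.

```python
import math

def segment_count(megapixels: float, segment_mp: float = 10.0) -> int:
    """Hypothetical: number of segments an image is split into.

    Rounds to the nearest whole segment (at least one) so the nominal
    20 MP and 61 MP images give the 2 and 6 segments guessed above.
    """
    return max(1, round(megapixels / segment_mp))

def busy_threads(megapixels: float, cores: int, segment_mp: float = 10.0) -> int:
    """Threads that have work at once: one per segment, capped by core count."""
    return min(cores, segment_count(megapixels, segment_mp))

def wall_time(megapixels: float, cores: int,
              seconds_per_segment: float = 1.0, segment_mp: float = 10.0) -> float:
    """Elapsed time if segments run in parallel 'waves' of at most `cores` threads."""
    waves = math.ceil(segment_count(megapixels, segment_mp) / cores)
    return waves * seconds_per_segment

# Nominal sizes (assumed): OM-1 about 20 MP, A7RV about 61 MP.
for name, mp in (("OM-1", 20), ("A7RV", 61)):
    print(name, segment_count(mp), busy_threads(mp, 12), wall_time(mp, 12))
```

Under these assumptions both images finish in one "wave" on a 12-core machine, so elapsed time is about the same while the larger image keeps more threads busy. On a machine with only two free cores the same model predicts the larger image taking three times as long. It is a simplification and does not by itself explain all 12 cores reaching 100%.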

It was just a quick, and no doubt sloppy, test on the spur of the moment (ISO in the range 400 to 2000, so not terribly noisy), using files that happened to be to hand, but this talk of M1 versus M3 made me wonder what the times might be on an M2. It’s a Studio with M2 Max and 32GB RAM. Ten ORF files processed with DeepPrimeXD in approx 1m 5s.
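For anyone who wants a per-file figure from a batch like this, the arithmetic is just total time divided by file count. A minimal Python sketch, using only the numbers quoted above (the function name is made up for illustration):

```python
def seconds_per_file(minutes: int, seconds: int, file_count: int) -> float:
    """Average processing time per file for a batch run."""
    return (minutes * 60 + seconds) / file_count

# Ten ORF files in approx 1m 5s on the M2 Max Studio.
per_file = seconds_per_file(1, 5, 10)
print(per_file)
```

That works out to roughly 6.5 seconds per file. It is an average over one quick run, so it is not directly comparable with timings from other cameras, file sizes or DxO versions mentioned in this thread.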

Thanks for those tests.

I have an M1 Max MBP; I chose the Max for faster processing and discovered it was no use, since DxO uses the Neural Engine!

My M1 was 60x faster than my previous 2012 MBP (yes, 60x) and so much quieter.

I needed 8 TB for my data; that is far too expensive as internal storage, so I have 1 TB internal and 8 TB external. It is good to separate data and system, but there are some issues:

- sometimes the mount point of the external SSD changes,

- I must shut down the computer to unmount the external drive,

- it is presumably slower than internal storage.

Sometimes I have to quit Preview.app because, for some reason, a file is still open on my external drive even if the window is closed (but the app is still running).

So if you cannot unmount your external drive, try quitting all running apps and retry.

I’ve explained myself badly.

If I want to put my computer in a bag, the external SSD’s connector can’t stay plugged in.

So I have to quit all the applications to unmount the drive.

Of course, for moving around the house, it is not necessary.

With internal storage, the very good battery life would let me leave the computer in standby.

One thing you might try: a Transcend JetDrive (they are available on Amazon). I have a 1 TB JetDrive in my MBP16 that is used for Time Machine backups. It stays in the MBP, so no unplugging or plugging in is necessary. It’s not fast, but it works fine as a Time Machine backup target.

David

When processing Sony RAW files, time correlates to pixel count, e.g. my 33MP RAWs take roughly half the time of my 61MP RAWs
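As a quick sanity check on "roughly half": if time scaled exactly with pixel count, the expected ratio is just the ratio of the two resolutions. A small Python sketch under that assumption (strict linear scaling is an assumption here, not something measured, and the function name is made up):

```python
def expected_time_ratio(small_mp: float, large_mp: float) -> float:
    """Expected time of the smaller file relative to the larger one,
    assuming processing time is strictly proportional to pixel count."""
    return small_mp / large_mp

ratio = expected_time_ratio(33, 61)
print(f"{ratio:.2f}")  # prints 0.54
```

So a 33MP file taking a little over half the time of a 61MP file is what simple proportional scaling would predict.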